The AI Gender Gap: Why Women Are Opting Out and How We Close It by 2030

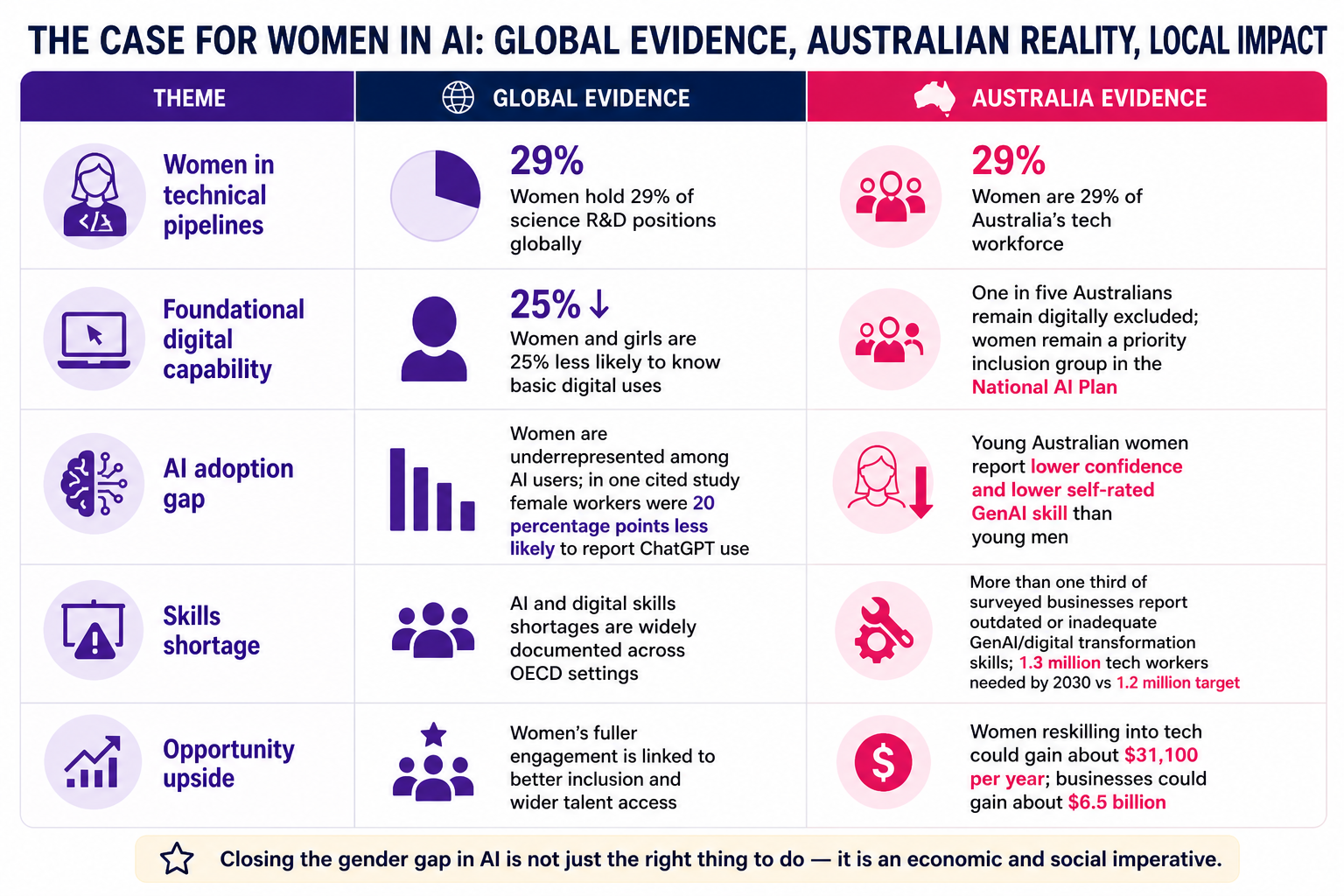

The evidence is increasingly clear. Women are participating in the AI transition at lower rates than men, but the gap is not best explained by lower capability. Instead, it is shaped by lower familiarity, lower self-rated confidence, greater exposure to structural barriers, and stronger ethical and social risk concerns. Global evidence from UNESCO, OECD and official working papers shows that women remain underrepresented in AI research, AI-related occupations and AI use, while also reporting more concern about privacy, misinformation, bias, labour market disruption and misuse. Recent workplace evidence adds a further constraint. When people are believed to have used AI, they can be judged as less competent, and that penalty is larger for women.

For Australia, this is not just an equity issue. It is a capability issue. The 2025 National AI Plan states that success should be measured by how widely AI benefits are shared and how inequalities are reduced. At the same time, it recognises that women are among the groups at higher risk of disruption, and that digital exclusion and regional divides remain real. Deloitte estimates that more than a third of surveyed businesses lack adequate or current generative AI and digital transformation skills, that Australia will need 1.3 million technology workers by 2030 against a government target of 1.2 million, and that women who reskill into technology roles could earn around $31,100 more per year while medium and large businesses gain an estimated $6.5 billion from a larger, more diverse talent pool.

The policy implication is straightforward. Closing the AI gender gap is not a “nice to have” diversity project. It is central to building an AI-ready workforce, improving trust and safety, and ensuring that women and girls help shape AI rather than merely absorb its consequences. The most effective response is not a single programme but a coordinated agenda across policy, education, industry and community: targeted AI literacy, gender-disaggregated measurement, clear workplace norms, inclusive reskilling pathways, regional access, and deliberate action on bias and safety.

Why this matters now

Generative AI is diffusing faster than previous waves of digital technology, and this creates both opportunity and risk. In the UK, Stephany & Duszyński (2026) show that women use generative AI less often than men not primarily because of lower access, but because they perceive its societal risks differently. In their nationally representative data, frequent personal use was 14.7% for women and 20.0% for men, a base gap of 5.3 percentage points. Among people concerned about mental health harms, the gap widened to 16.8 percentage points, driven by lower use among women rather than higher use among men. Their analysis explicitly suggests that AI-related risk perception is one of the strongest predictors of women’s adoption behaviour.

That finding matters because it reframes women’s hesitation. It is not well described as simple resistance to technology. It is better understood as risk awareness shaped by lived experience of bias, weaker institutional support, and rational concerns about downside risk. This interpretation is consistent with workplace studies showing that women can face a larger social penalty for using AI and with surveys showing that women are less likely to adopt a tool by “trying first and learning later” when norms and safeguards are unclear.

Australia’s policy environment makes the urgency even sharper. The National AI Plan says success will be judged by whether inequalities are reduced. It also notes that around one in five Australians remain digitally excluded, that regional organisations lag metropolitan ones in AI adoption, and that women are among the groups most at risk of disruption from AI driven labour market change. Yet the plan largely treats “women” as a broad category and mentions “women and girls” most explicitly in the context of abuse and safety risks, rather than in a dedicated capability or pipeline strategy for girls and young women.

What the evidence says about adoption

Knowledge and familiarity

The starting point is uneven familiarity. The joint UNESCO/OECD/IDB report, The Effects of Artificial Intelligence on the Working Lives of Women (2022), notes that women hold only 29% of science R&D positions globally and are already 25% less likely than men to know how to use digital technology for basic purposes such as spreadsheet formulas. The same report links these gaps to weaker access to jobs, training and progression in AI-related labour markets.

Newer OECD evidence shows that the gap persists in the AI era. In its 2024 policy brief Algorithm and Eve, the OECD reports that women are underrepresented in the AI workforce, among AI users, and among ICT graduates. It also cites evidence that female workers were 20 percentage points less likely than male workers in the same occupation to report using ChatGPT, with much of the difference still present after controlling for workplace and task mix. The OECD points to training needs and uneven access to AI-related opportunities as key explanations.

Empirical studies in higher education show a similar pattern. Gesser-Edelsburg and colleagues, in a study of Israeli higher education, found that men reported higher familiarity with AI tools than women, but that this did not map cleanly to usage, suggesting a self assessment gap rather than a simple capability gap. Rajkai, Dringó-Horváth & Nagy, studying Hungarian students, similarly found that women faced more barriers linked to lack of knowledge and lack of technical tools, while the broader Asian systematic review by Kalim et al. identifies technological illiteracy and lack of training as recurring barriers.

Confidence and self-efficacy

Confidence gaps appear early and then compound. OECD data show that by age 15, fewer than 1.5% of girls across OECD countries aspire to become ICT professionals, compared with almost 10% of boys. The OECD also notes that boys often rate their own competence in maths and science more highly than girls with similar performance. This matters because self-efficacy is a powerful predictor of later field of study, skill acquisition and career choice.

At the point of AI use, the same pattern remains visible. Jereb & Urh found that male university students were more likely to use both free and paid AI tools and to rate themselves as more skilled in using them. In their sample, male students’ average self rated skill score was higher than women’s, even though widespread educational use of tools such as ChatGPT had already normalised access. Youth Insight’s Australian study likewise found that young men reported higher confidence and higher self rated skill in using generative AI than young women.

Workplace evidence suggests that lower confidence can be entirely rational. In a 2025 pre-registered experiment and accompanying field data, Gai, Hou & Tu found that only 41% of software engineers in a tech company had adopted a generative AI programming tool after twelve months, with lower adoption rates among female engineers. In the experiment, identical AI-assisted work received competence ratings 9% lower on average, and the perceived penalty was larger for women than for men. This “competence penalty” creates a strong behavioural disincentive. If using AI makes women more likely to be judged as less capable, slower adoption is not irrational.

Ethical concerns and risk perception

Ethical concern is not peripheral to this story; it is central. The Harvard Business School summary of Otis, Delecourt, Cranney & Koning’s work reports that women adopt AI at materially lower rates in part because they are more likely to question whether its use is ethical and to worry that they will be judged harshly for relying on it. Even when access was equalised in their Kenyan field setting, women were still less likely to engage.

Australian youth data reinforce this. Youth Insight found that major concerns included cheating, misinformation, dependence and privacy, while women reported lower confidence in recognising AI-generated content. More broadly, Good Things Australia’s survey found that Australian women were more likely than men to report difficulty telling AI-generated content from real content, and less likely to turn to AI when something went wrong.

Taken together, the evidence supports a practical conclusion: women’s lower AI adoption is entwined with legitimate concerns about fairness, misuse, surveillance, misinformation and social judgement. Any serious response must therefore address both capability and trust. Training alone will help, but training without better norms, clearer rules and safer systems will leave the core problem intact.

Australia’s workforce and skills case

Australia’s workforce case is compelling. Deloitte’s 2025 Women in Technology analysis reports that more than a third of surveyed businesses lack adequate or current generative AI and digital transformation skills. It also estimates that Australia will need 1.3 million technology workers by 2030, compared with a government target of 1.2 million, leaving a shortfall of more than 100,000 roles. Deloitte further estimates that there are 661,300 women with a short term skilling pathway into technology.

At the same time, the existing tech workforce remains markedly imbalanced. ACS Digital Pulse 2024 reports that women made up only 29% of Australia’s tech workforce in 2023, that only 43% of women in tech were in managerial roles compared with 50% of men, and that an adjusted pay gap of $12,600 per year remained. The same report argues that progress over the last decade has been slow and that structural barriers still constrain participation and retention.

The National AI Plan adds a place based lens to this picture. It states that regional organisations adopt AI at lower rates than metropolitan organisations and that regional businesses are more likely to be unaware of AI opportunities. It also identifies women, First Nations people, mature aged workers, people with disability and those in regional areas as groups particularly exposed to disruption. That means the AI gender gap in Australia is not just about women in capital city office jobs. It is also about who can access trusted AI capability in regional communities, community services and smaller organisations.

Key statistics

The table below brings the highest confidence global and Australian indicators together.

Structural drivers and barriers

The gender gap is structural, not individual. OECD evidence shows that stereotypes emerge early, shape subject choices, depress girls’ aspirations towards ICT careers and reduce self efficacy even when performance differences are small. The Australian Youth in STEM report points in the same direction, showing lower confidence among girls and young women in several STEM domains, especially engineering and technology.

Later, those early inequalities are reinforced by labour market conditions. Deloitte points to gender norms, unclear skilling pathways, poor workplace culture, bias and discrimination as persistent barriers. ACS adds evidence of leadership and pay gaps, and notes that women in tech value diversity policies, visible support and safe reporting channels precisely because inclusive workplace conditions are not yet standard.

Cross context reviews show the same pattern. Kalim’s 2025 systematic review identifies technological illiteracy, cultural barriers, exclusion from policy design, privacy and moral concerns, and lack of training as recurring barriers to women’s AI adoption. In other words, the system often expects women to adapt to AI without first addressing the conditions that shape trust, access, confidence and safety.

Practical recommendations

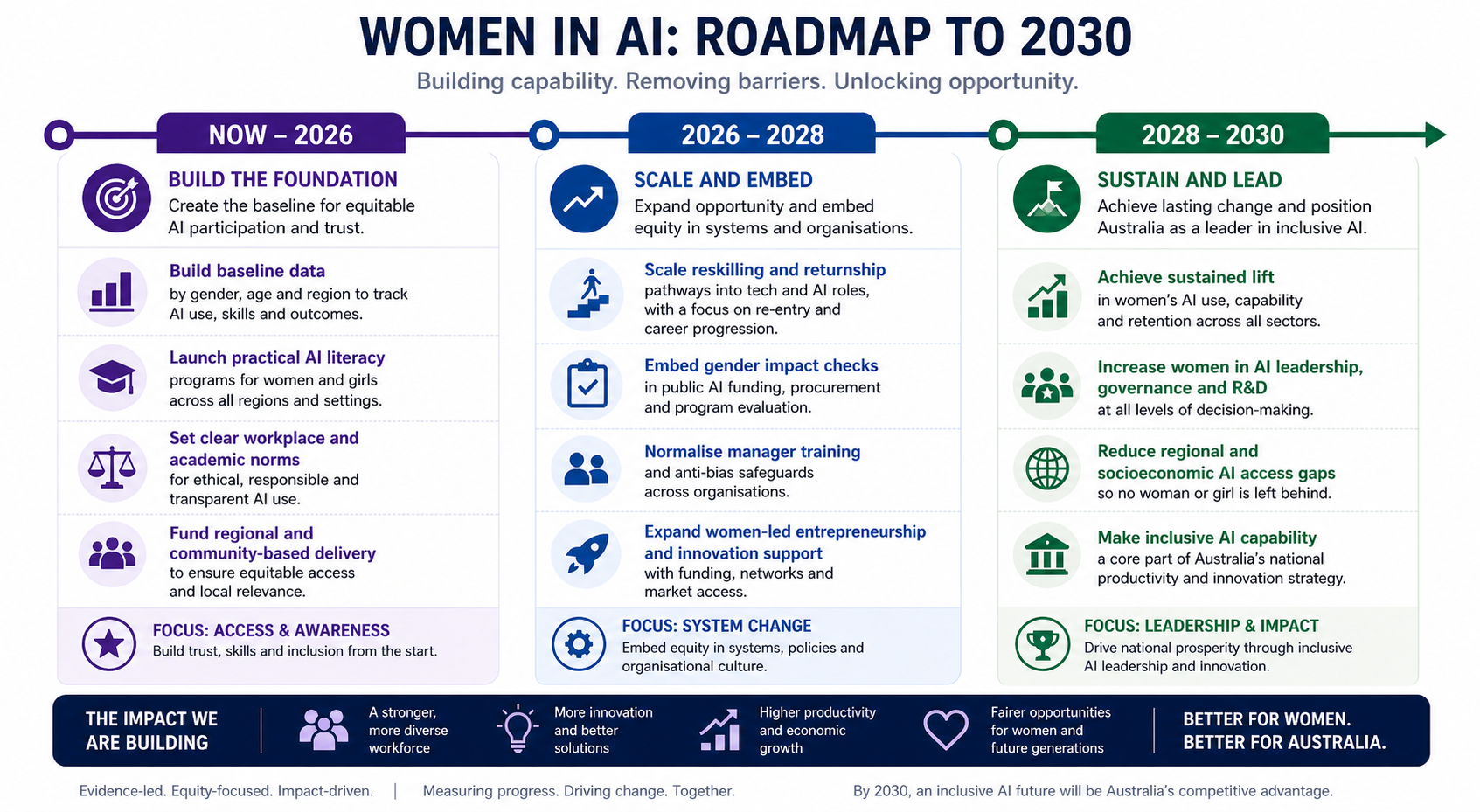

Policy should begin with measurement and accountability. Governments should publish gender-, age- and region-disaggregated data on AI awareness, use, training participation and labour market outcomes. They should embed gender impact assessment into public AI funding, procurement and safety frameworks, and treat community-based capability building as part of national AI infrastructure, not as an optional add-on. The National AI Plan already provides an inclusion mandate. What is needed now is sharper operationalisation for women and girls.
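As a rough illustration of what disaggregated measurement involves, the sketch below computes AI usage rates by gender and region and then the men-minus-women gap in percentage points for each region. The records, field layout and function names are hypothetical and exist only for illustration; they are not drawn from any survey cited here. Age bands could be added as a further grouping key in the same way.

```python
from collections import defaultdict

# Hypothetical survey records: (gender, region, uses_ai).
# Illustrative values only, not real survey data.
RESPONSES = [
    ("women", "metro", True),
    ("women", "metro", False),
    ("women", "regional", False),
    ("women", "regional", False),
    ("men", "metro", True),
    ("men", "metro", True),
    ("men", "regional", True),
    ("men", "regional", False),
]


def usage_rates(responses):
    """Return the share of respondents using AI for each (gender, region) group."""
    counts = defaultdict(lambda: [0, 0])  # group -> [users, respondents]
    for gender, region, uses_ai in responses:
        counts[(gender, region)][0] += int(uses_ai)
        counts[(gender, region)][1] += 1
    return {group: users / total for group, (users, total) in counts.items()}


def gender_gap_by_region(rates):
    """Return the men-minus-women usage gap in percentage points per region."""
    regions = {region for _, region in rates}
    return {
        region: round(100 * (rates[("men", region)] - rates[("women", region)]), 1)
        for region in regions
    }


rates = usage_rates(RESPONSES)
gaps = gender_gap_by_region(rates)
for region in sorted(gaps):
    print(f"{region}: {gaps[region]} percentage points")
```

Reporting the gap within each region, rather than a single national figure, is what makes it possible to see whether regional women are falling behind both metropolitan women and regional men.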

Education policy should move beyond narrow “learn to code” assumptions. Effective AI literacy for girls and women should combine practical use, prompting, verification, authorship, privacy, bias awareness and career pathways. Schools, TAFEs and universities should normalise ethical AI use through clear guidance, reduce ambiguity around “cheating”, expand role model visibility, and create targeted entry points for girls, women returners and non technical learners. OECD and student studies both suggest that confidence improves when expectations are explicit and learning is scaffolded.

Industry should stop treating adoption as a simple access problem. Employers need universal onboarding, protected learning time, clear norms on what kinds of AI use are encouraged, and manager training that reduces competence penalties. Retention matters as much as hiring. Returnships, reskilling pathways, internal sponsorship, flexible progression and bias audited performance systems are essential if women are to stay and advance in AI linked roles.

Community action is equally important, especially outside formal education and large firms. Trusted, local, beginner friendly programmes can help women build confidence, learn safely, and connect AI capability to real life goals such as work, parenting, entrepreneurship, government services and community participation. Australian evidence from Good Things Australia shows that women want support that feels practical, safe and relevant, especially where digital exclusion already exists.

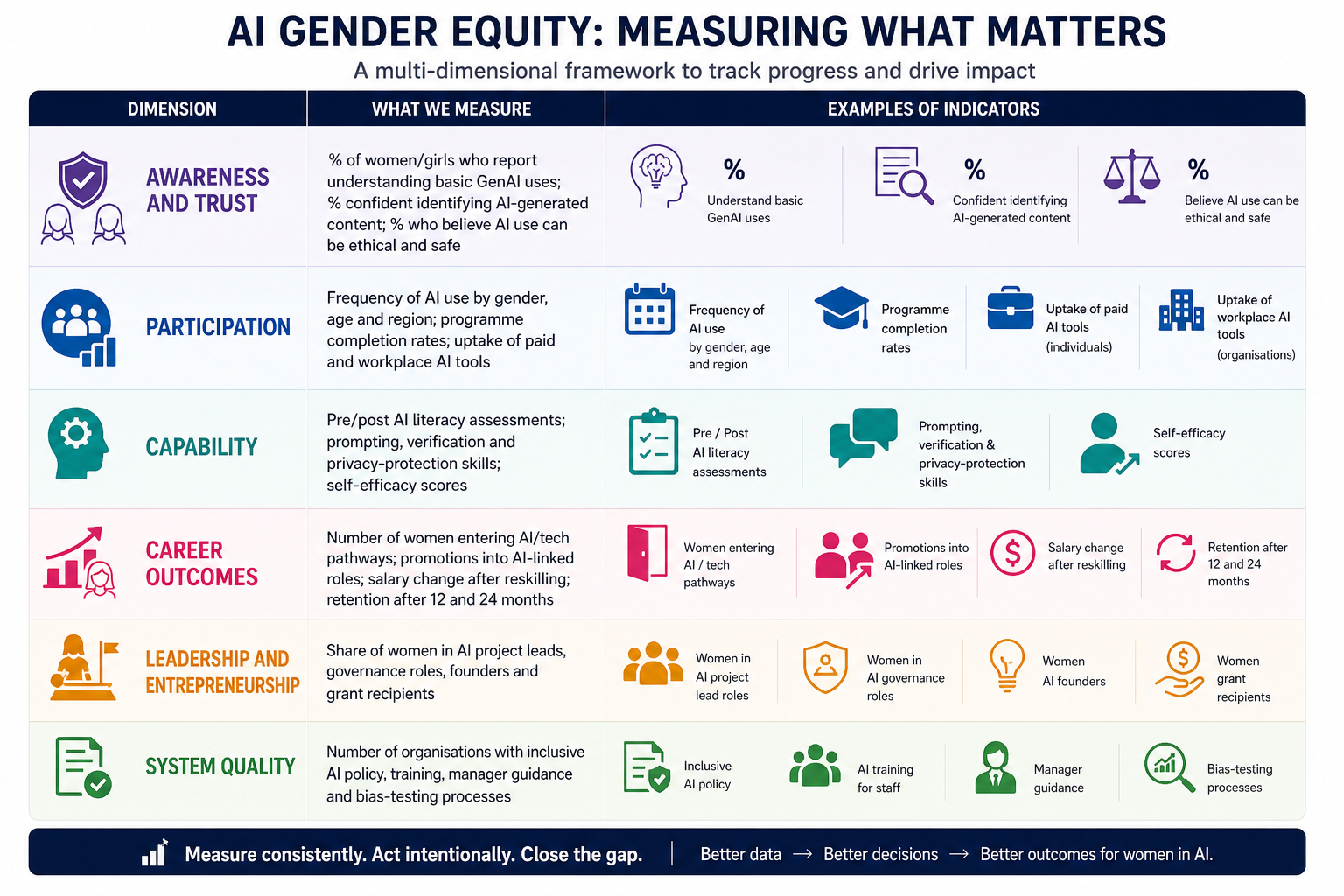

Measurement and implementation roadmap

A practical impact framework should track not only participation but also confidence, trust, progression and influence. Suggested indicators are set out below.

The roadmap below synthesises the peer-reviewed evidence discussed above into short-, medium- and long-term priorities.

Call to action

The central lesson from the evidence is simple. Women’s lower participation in AI is not a sign that they do not belong in this transition. It is a sign that the transition itself is being designed, explained and governed unevenly. When familiarity is lower, confidence is penalised, norms are ambiguous, and legitimate social risks remain unresolved, unequal adoption is the predictable result.

For Australia, the cost of inaction is twofold. Women and girls risk missing capability, career and leadership opportunities at the precise moment AI is reshaping productivity and work. At the same time, the country risks deepening its skills shortage and building AI systems that reflect too narrow a slice of society. Closing the gap is therefore both a gender equity imperative and a national capability strategy.

Women in AI Australia Ltd can use this moment to argue for a stronger national compact: practical capability building, ethical clarity, safer systems, better data, and genuine inclusion from school to workplace to community.

References

Key sources used in this synthesis include the official and primary materials cited above, especially:

UNESCO, OECD and IDB (2022), The Effects of AI on the Working Lives of Women.

OECD (2024), Algorithm and Eve: How AI Will Impact Women at Work.

OECD (2024), OECD Digital Economy Outlook 2024, Volume 2 – chapter on women and digital innovation.

Department of Industry, Science and Resources (2025), National AI Plan.

Deloitte Access Economics / RMIT Online (2025), Women in Technology: How Skills and Talent Diversity Drive Business Success.

ACS (2024), Australia’s Digital Pulse 2024.

Gesser-Edelsburg et al. (2024), study of AI and GenAI familiarity and use in Israeli higher education.

Jereb and Urh (2024), The Use of Artificial Intelligence among Students in Higher Education.

Rajkai, Dringó-Horváth and Nagy (2025), Artificial Intelligence in Higher Education: Students’ AI Use and Its Influencing Factors.

Kalim et al. (2025), Barriers to AI Adoption for Women in Higher Education: A Systematic Review of the Asian Context.

Gai, Hou and Tu (2025), Competence Penalty Is a Barrier to the Adoption of New Technology; related HBR summary by Acar et al. (2025).

Stephany and Duszyński (2026), Women Worry, Men Adopt: How Gendered Perceptions Shape the Use of Generative AI.

Good Things Australia (2026), National media release and supporting survey on the AI gender divide in Australia.

Youth Insight / Student Edge (2023), Young People’s Perception and Use of Generative AI.